The Show-Me State Meets the Ghost in the Meyer AI Machine in the New Safe Harbor Michael Corleone Style – Music Technology Policy

There’s a club in DC that may or may not exist whose members are guys who do things with stuff. They might be called The Cockroaches, if they exist, of course. Why did they choose that name? Because they’re the ones who survive anything, including a nuclear holocaust. They have the jobs you don’t know about, what some people call the deep state. And there’s a political version of The Cockroaches: the people at the highest levels who make things happen that affect your life. Ever wonder why a certain policy got killed or promoted at the state or city level? Why did that data center get built in that place? Why is a massive, grotesque power line carving up the Texas Hill Country?

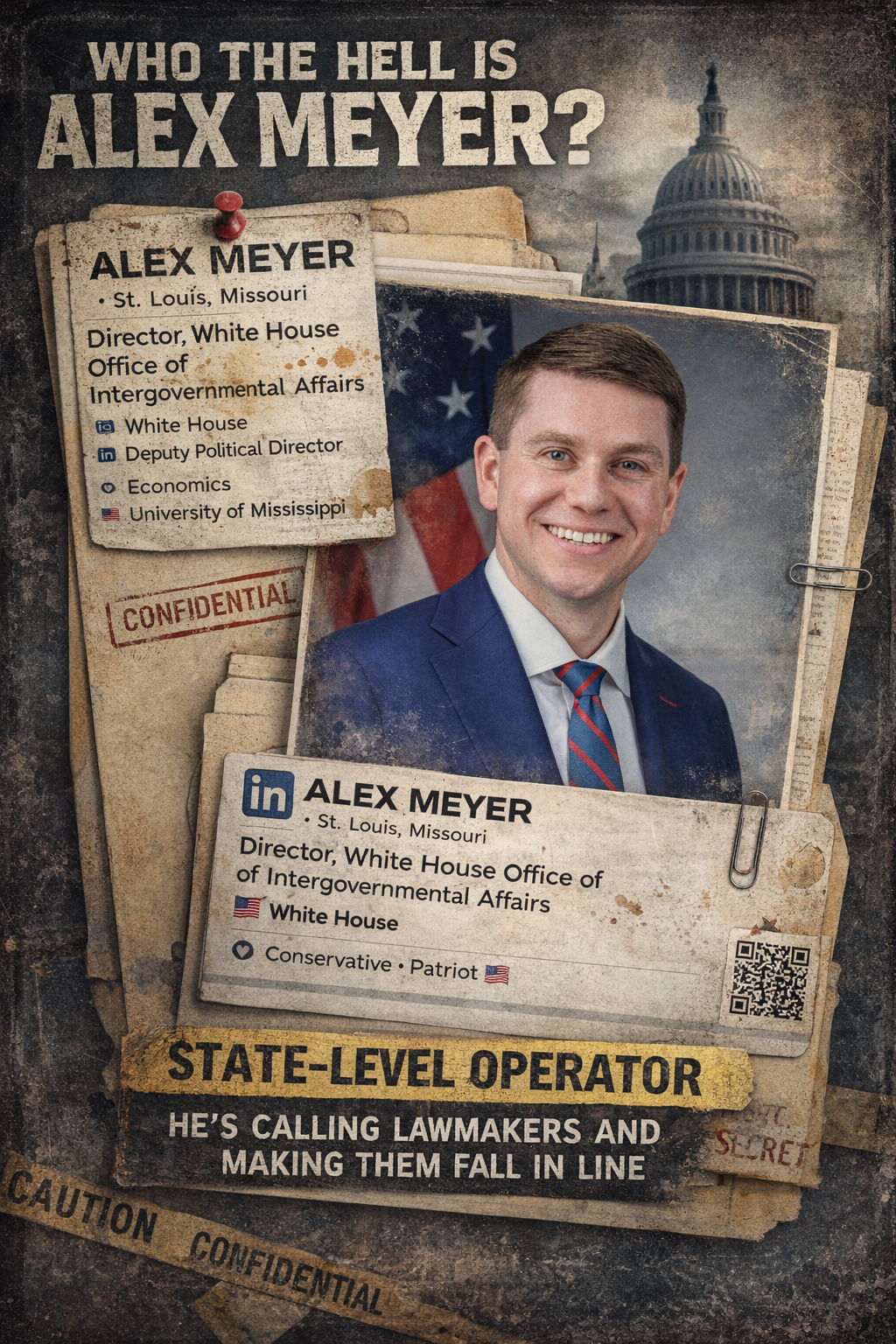

You probably haven’t heard the name Alex Meyer. He’s not on TV. He’s not drafting white papers or giving keynote speeches about AI. But he’s exactly the kind of person you’re thinking about when you ask those questions. As the White House’s point person for intergovernmental affairs, his job is to call state legislators, governors, and local officials and make sure they understand where the administration stands. Not in theory—in practice. Not in public—on the phone. He’s not the architect of the policy. He’s the one who shows up when something needs to move, or stop moving, and makes sure the message lands. And that means that even if you’ve never heard his name, the decisions he touches can shape what laws pass, what projects get greenlit, and what rules end up governing your daily life.

Alex Meyer is a St. Louis native and De Smet Jesuit graduate who came up through Missouri Republican politics and fundraising before moving into national campaign and White House roles. If that doesn’t say “bagman” to you, guess what he’s doing now? You know…intergovernmental affairs.

Meyer’s operation starts to look like a kind of safe harbor with teeth. The formal message is not “you must repeal your bill.” The message is softer than that, more conditional, more deniable: narrow it, weaken it, or risk consequences somewhere else, whether in funding, political support, or legislative momentum. That is what makes it a safe harbor, Michael Corleone-style. States are invited to avoid conflict if they stay within boundaries the White House finds acceptable. But it has teeth because the choice is not cost-free. Step outside the lane, and the pressure begins. That’s a nice state you got there. Be a shame if something happened to it.

Missouri

And the prodigal son returns for his fatted calf. In Missouri, the pressure campaign appears to have worked through a combination of federal funding leverage, internal legislative resistance, and direct White House contact.

According to the Missouri Independent:

The promise and peril of artificial intelligence have been a recurring theme for Missouri lawmakers this year, as they debate safeguards on campaign advertisements, companion chatbots and mental health therapy that use the technology.

But efforts to enact regulations in Missouri stalled last week in the state Senate amid fears the legislation could jeopardize nearly $900 million in remaining federal broadband funds for rural internet expansion.

The underlying bill, sponsored by GOP state Sen. Joe Nicola of Grain Valley, would specify that liability for harm caused by an AI system always resides with a person or organization — whether it’s the company that designed and created the system or an individual who used it. Courts would decide where liability lies in specific cases.

The bill would also prohibit AI from being granted legal personhood. People would be prohibited from marrying an AI partner, and AI could not own property or be an officer of a corporation.

“We don’t want anybody, any company or any person, to be able to blame the machine or blame the AI,” Nicola said.

An amendment sponsored by Republican state Sen. Brad Hudson of Cape Fair would require age verification to restrict minors’ use of AI chatbots and make it unlawful to develop or publish chatbots likely to encourage minors to engage in self-harm or sexual conduct.

Another amendment, sponsored by Senate Minority Leader Doug Beck, a Democrat from Affton, would prohibit the use of AI to prescribe medication or controlled substances, while Republican state Sen. David Gregory of Chesterfield attached an amendment banning nondisclosure agreements in lawsuits stemming from the bill.

But the debate turned quickly to broadband funding, with lawmakers warning the bill could run afoul of President Donald Trump and jeopardize federal money for rural high-speed internet access.

And guess who delivered that message? Hometown-boy-made-good and David Sacks minion Alex Meyer. Yep: “show me” the money.

The Missouri Independent reported that Sen. Joe Nicola’s AI bill stalled after other Republicans warned it could trigger the loss of nearly $900 million in federal broadband funds under the administration’s push against “onerous” state AI regulation. The Daily Signal then added a more detailed account from Nicola himself: he said he spoke directly with White House Intergovernmental Affairs Director Alex Meyer, told him he was trying to put “some guardrails” in place without “hindering innovation,” and was told the bill was overly broad and that some penalty provisions were “a bit steep.”

Nicola said he was already working on the 11th version of the bill to address White House concerns, while also objecting to the pressure, saying, “I’m very frustrated, to be quite honest,” and, more sharply, “I’d take great offense that the president would threaten us by withholding federal funds from protecting our people.” Taken together, the reporting suggests that in Missouri the White House effort was not just rhetorical opposition to AI guardrails, but an active attempt to soften a state bill by linking it to federal funding risk and channeling that message both through state lawmakers and through a direct call from Meyer himself.

MTP readers will remember that David Sacks and Adam Thierer tried this in the open, with help from Texas Senator Ted Cruz, through the OBBBA AI moratorium: tie state regulation to the risk of losing federal funding and dare lawmakers to resist. That effort collapsed in the Senate, which voted 99-1 to strip the moratorium, a defeat so bad that Cruz voted against his own provision to get out of the way of the self-own.

So now, like those cockroaches, the strategy doesn’t die: it just scatters into the walls. In Missouri, the exact same playbook shows up again, this time not in a bill, but in a phone call. State Sen. Joe Nicola said his AI safeguards bill stalled after fellow Republicans warned it could put nearly $900 million in federal broadband funding at risk. He then spoke directly with White House Intergovernmental Affairs Director Alex Meyer, explaining he was trying to put “some guardrails” in place without “hindering innovation.”

According to Nicola, Meyer pushed back that the bill was “overly broad” and that some penalties were “a bit steep.” By that point Nicola said he was already on the 11th version of the bill to address those concerns, even as he publicly bristled at the pressure: “I’m very frustrated, to be quite honest,” and more bluntly, “I’d take great offense that the president would threaten us by withholding federal funds from protecting our people.” Separate reporting confirmed the funding threat dynamic, noting the bill’s collapse amid warnings about losing broadband support.

Put it together and the pattern is hard to miss: what couldn’t be imposed from Washington through legislation is now being pursued through leverage, quietly, state by state, call by call.

Tennessee

Tennessee is an especially telling case, not least because one of its senior federal officials has taken a leading role in pushing back against efforts to centralize AI policy in Washington. Senator Marsha Blackburn emerged as a prominent opponent of the proposed federal AI moratorium, arguing that states should retain the ability to set guardrails around rapidly evolving technologies rather than be sidelined by a one-size-fits-all federal approach. In fact, Senator Blackburn was a major force in stopping the first round of the “moratorium” in the Senate, which was the brushback pitch that put David Sacks in his place early on and stripped his AI-maximalist proposal right out of President Trump’s must-pass One Big Beautiful Bill Act.

Against that backdrop, Tennessee’s own legislative effort, SB 2171, looks less like an outlier and more like a natural extension of that philosophy: a state-level attempt to impose transparency, child-safety protections, and basic governance obligations on advanced AI systems, not to mention a companion to Tennessee’s digital replica law, the ELVIS Act. The tension, then, is not abstract. It is immediate and local. Even as Tennessee’s federal delegation signals resistance to federal preemption, the state’s own effort to regulate AI has reportedly been narrowed following pressure from the White House bagman, illustrating in real time the friction between state-led policymaking and federal pressure to keep AI regulation centralized. You know, “intergovernmental affairs.”

SB 2171 was the more ambitious “frontier model + child safety” bill. As introduced, it would have enacted the “Artificial Intelligence Public Safety and Child Protection Transparency Act.” In substance, it required large frontier developers to publish public safety plans addressing catastrophic risks, and required large chatbot providers to publish detailed child-safety plans, use third-party assessments, report serious incidents, and submit summaries of catastrophic-risk assessments to the Tennessee attorney general. The official bill summary says it covered catastrophic-risk mitigation, protection of unreleased model weights, child-safety incidents, confidential reporting, and civil penalties up to $1 million for a first frontier-developer violation and up to $3 million for later violations; chatbot providers faced lower penalties, with exclusive enforcement by the attorney general.

What makes Tennessee especially important is that it looks like a scaled-down frontier-safety bill disguised as a child-safety bill after White House pressure. Axios reported that White House officials contacted Tennessee lawmakers to push them to weaken or abandon the measure, and separately reported that during an April 7 hearing Sen. Ken Yager said the bill “was amended at the suggestion of the White House” after a call that morning. The official Tennessee bill page still reflects a bill centered on safety plans, incident reporting, and risk summaries, but the political story is that the original approach “mirrored” transparency proposals seen in states like California and New York and was narrowed under pressure.

The Tennessee bill’s policy significance is that it tried to create a state-level reporting and governance regime for advanced AI systems without going all the way to a licensing system. It was not just a chatbot-disclosure bill. It reached model-level risk management, internal governance, cybersecurity for weights, and mandatory incident reporting, while also building in redactions for trade secrets, cybersecurity, public safety, and national security, plus an equivalence mechanism for compliance with stricter federal rules. In other words, Tennessee was experimenting with a conservative version of frontier governance: disclosure-heavy, AG-enforced, and formally tied to child safety and catastrophic risk rather than broad substantive design mandates.

And that’s a big no-no to David Sacks and Adam Thierer.

Florida

But there’s no sunshine for cockroaches in the Sunshine State. Florida’s SB 482 was much narrower and more consumer-facing than the bills in other states. Florida’s “Artificial Intelligence Bill of Rights” would have prohibited certain government AI contracting involving entities tied to “governments of concern,” required bot operators to notify users they were interacting with AI, and imposed special protections for minors using “companion chatbot” platforms. The Florida Senate’s analysis says the bill would have required parental consent for minors’ chatbot accounts, parental controls over those accounts, deletion of personal information after account termination, recurring notices that the chatbot is artificial and not human, and “reasonable measures” to prevent harmful outputs to minors. It also gave the Department of Legal Affairs enforcement authority, with civil penalties up to $50,000 per violation and a private damages provision for knowing or reckless violations affecting minors.

Florida’s political story is almost the inverse of Tennessee’s. Tennessee involved pressure to amend a bill that still moved. Florida involved a governor-backed bill that passed the Senate and then died because the House, sharing the White House’s view, would not take it up. Axios reported that Gov. Ron DeSantis backed SB 482, that the bill clashed with Trump’s executive order favoring federal control of AI policy, and later that Florida House Speaker Daniel Perez would not bring it up and “shares the White House’s view on state AI laws.” The official Florida bill page confirms that SB 482 passed the Senate on March 4 and then “Died in Messages” in the House on March 13.

Did you think David Sacks was temporary? Think again.

The Revenge of the Sacks

If this is how the fight over AI guardrails plays out, it is hard not to ask what comes next. The same logic that was used to push back on state AI bills—federal leverage, quiet calls, alignment pressure—maps almost perfectly onto the next frontier: the physical infrastructure that makes AI possible.

Data centers are already driving massive buildouts of power, water, and land use across the country, and they implicate everything from local zoning to transmission corridors to ratepayer exposure. If the strategy is to prevent states from slowing or shaping AI development, then it would not be surprising to see that pressure migrate from software policy to infrastructure approvals. In that light, the Missouri and Tennessee episodes may not be isolated skirmishes but early signals of a broader campaign.

Call it the revenge of the Sacks: what could not be secured in Congress gets pursued through the states, not just in abstract regulatory frameworks, but in the concrete decisions about where facilities get built, how they are powered, and who bears the cost. And by the time the public sees the results—another hyperscale campus breaking ground, another high-voltage line cutting across a landscape—the real decisions may already have been made, quietly, in conversations most people never hear.

So these bills tell you something important about the White House strategy. Tennessee targeted frontier-model governance through safety plans, risk disclosures, and incident reporting; Florida targeted consumer protection and child safety through chatbot notices, parental controls, and platform duties. The White House posture appears hostile to both categories, but the tactics differ: in Tennessee the pressure seems aimed at narrowing a still-live bill; in Florida it aligned with House leadership to let a Senate-passed bill die. That suggests the administration is not just resisting one specific flavor of AI regulation. It is resisting state experimentation across the board, whether the state frame is existential risk, child safety, transparency, or consumer rights.

As reported by Elizabeth Troutman Mitchell in the Daily Signal:

“It will happen in every state,” a source close to AI policy told The Daily Signal.

“We are proud of the President’s National AI Framework,” a White House official told The Daily Signal. “The Trump Administration is eager to work with partners who will help us implement that policy and achieve a comprehensive AI framework that serves all Americans. This approach will protect children, prevent censorship, respect intellectual property, and safeguard communities while ensuring America remains the undisputed leader in AI and technological innovation.”